SLAM Navigation for AGV and AMR: Complete Guide

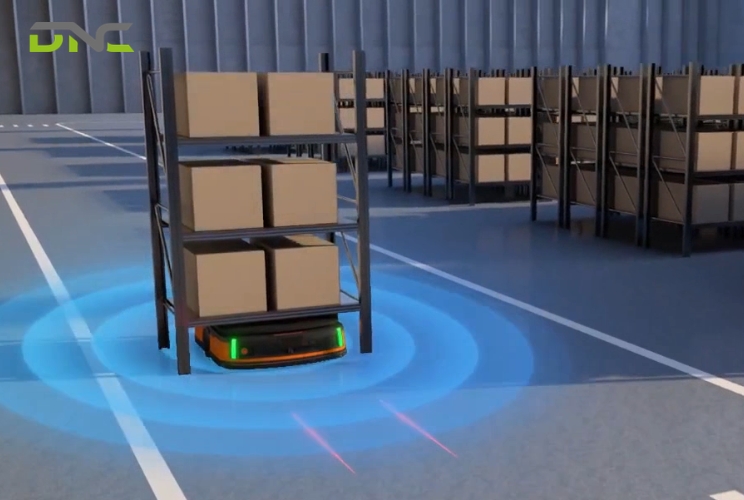

SLAM — Simultaneous Localization and Mapping — is the core navigation technology that allows autonomous mobile robots to build a digital map of your factory floor while simultaneously tracking their own position within that map. Every AGV and AMR that navigates without magnetic tape, embedded wires, or fixed reflectors depends on some variant of SLAM to move materials reliably from point A to point B.

For Malaysian manufacturers evaluating mobile robot deployments, SLAM is not an abstract computer science concept; it is the engineering foundation that determines whether your AGV or AMR fleet can handle dynamic production environments, maintain ±10–30 mm positional accuracy, and operate without the infrastructure costs of traditional guidance systems. SLAM performance directly impacts throughput, deployment speed, and total cost of ownership.

This guide breaks down how SLAM works inside AGV and AMR platforms — the sensor hardware, the algorithmic pipeline, the variants available, and the real-world challenges that Malaysian factory conditions present to SLAM-based navigation. DNC Automation’s engineering team deploys SLAM-equipped AGVs and AMRs integrated with Siemens PLC and SCADA systems across manufacturing facilities in Selangor, Johor, and Penang; the information here reflects what we see in production environments, not laboratory conditions.

What Is SLAM?

SLAM stands for Simultaneous Localization and Mapping. The term describes a computational problem: a mobile robot must construct a map of an unknown environment while simultaneously determining its own location within that map — using only onboard sensors and processing power. Neither the map nor the robot’s position is known in advance; both are estimated concurrently.

The SLAM problem was formalized in the robotics research community in 1986, when Randall Smith, Matthew Self, and Peter Cheeseman presented the foundational probabilistic framework at the IEEE International Conference on Robotics and Automation. Hugh Durrant-Whyte and John Leonard advanced the field significantly through the 1990s, establishing the Extended Kalman Filter (EKF) approach that became the first practical SLAM implementation. By the early 2000s, particle filter methods (FastSLAM) and graph-based optimization techniques expanded SLAM capabilities beyond what EKF could handle.

SLAM AGV and SLAM AMR systems in modern factory automation use mature, commercially proven implementations — not experimental algorithms. Platforms from KUKA, MiR, Omron, and Locus Robotics deploy SLAM variants that have been refined across millions of operating hours in warehouse and manufacturing environments. The technology is production-grade.

The critical distinction for factory automation: SLAM eliminates the need for external infrastructure. A SLAM-equipped AGV does not require magnetic tape on the floor, wires embedded beneath the surface, or laser reflectors mounted on walls. The robot builds and maintains its own spatial reference using onboard LiDAR, cameras, IMUs, and wheel encoders — reducing deployment time from weeks to days and eliminating infrastructure maintenance costs entirely.

How SLAM Works in AGV and AMR Systems

SLAM operates as a continuous cycle of four interdependent processes: mapping, localization, scan matching, and loop closure. Understanding each process explains why SLAM AGV and SLAM AMR systems behave the way they do on your factory floor.

Mapping Phase

During initial deployment, an operator drives the AGV or AMR through the facility while SLAM software constructs the first complete map. The robot’s LiDAR sensor emits thousands of laser pulses per second (a typical 2D LiDAR like the SICK TiM781 returns roughly 800 points per scan at 15 Hz, or about 12,000 measurements per second) and measures the time each pulse takes to return after reflecting off walls, racking, machinery, and other static features. These distance measurements form a 2D point cloud representing the environment’s geometry.
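As a rough illustration of the measurement principle described above, the sketch below converts a pulse's round-trip time into a range and turns one planar scan into (x, y) points in the sensor frame. The function names and the evenly spaced beam layout are assumptions made for this example, not any vendor's driver API.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0

def range_from_time_of_flight(round_trip_s: float) -> float:
    """Distance to the reflecting surface; the pulse travels out and back."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

def scan_to_points(ranges_m, start_angle_rad, angle_step_rad):
    """Convert one planar scan (one range per beam) into (x, y) points.

    Assumes beams are evenly spaced, starting at start_angle_rad.
    """
    points = []
    for i, r in enumerate(ranges_m):
        angle = start_angle_rad + i * angle_step_rad
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points

# A pulse that returns after about 66.7 nanoseconds hit something about 10 m away.
print(round(range_from_time_of_flight(66.7e-9), 2))
```

A real sensor driver also reports intensity and invalid-return flags, which this sketch leaves out.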

The SLAM algorithm assembles sequential point clouds into a coherent spatial model. Each new scan is aligned with previous scans using probabilistic estimation — the algorithm calculates the most likely position and orientation of each scan relative to the accumulated map. The result is an occupancy grid map: a 2D representation where each cell (typically 5 cm resolution) is classified as occupied, free, or unknown.
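To make the occupancy grid concrete, here is a minimal sketch of how a single laser beam might update a grid with 5 cm cells. It uses a log-odds representation, which is a common choice but an assumption here; the increment values, the threshold, and the function names are illustrative and not taken from any specific SLAM package. Coordinates are assumed to be non-negative so they map directly to array indices.

```python
import math
import numpy as np

RESOLUTION_M = 0.05          # 5 cm cells, as in the text
L_OCC, L_FREE = 0.85, -0.4   # assumed log-odds increments for hit / pass-through

def world_to_cell(x, y):
    """Map metric coordinates to integer grid indices."""
    return int(math.floor(x / RESOLUTION_M)), int(math.floor(y / RESOLUTION_M))

def integrate_beam(log_odds, robot_xy, hit_xy):
    """Lower the odds of cells the beam crossed, raise the odds of the cell it hit."""
    (x0, y0), (x1, y1) = robot_xy, hit_xy
    n_steps = max(1, int(math.hypot(x1 - x0, y1 - y0) / (RESOLUTION_M / 2)))
    hit_cell = world_to_cell(x1, y1)
    visited = set()
    for k in range(n_steps):
        t = k / n_steps
        cell = world_to_cell(x0 + t * (x1 - x0), y0 + t * (y1 - y0))
        if cell != hit_cell and cell not in visited:
            visited.add(cell)            # update each crossed cell once per beam
            log_odds[cell] += L_FREE
    log_odds[hit_cell] += L_OCC

def classify(log_odds, threshold=0.2):
    """Return 1 for occupied, 0 for free, -1 for unknown."""
    labels = np.full(log_odds.shape, -1, dtype=int)
    labels[log_odds > threshold] = 1
    labels[log_odds < -threshold] = 0
    return labels

grid = np.zeros((40, 40))                       # a 2 m x 2 m patch
integrate_beam(grid, (0.025, 0.025), (1.025, 0.025))
labels = classify(grid)
```

After this one beam, the cells between the robot and the hit point read as free, the hit cell reads as occupied, and everything beyond it stays unknown.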

For a standard 10,000 m² manufacturing floor, initial mapping takes 2–4 hours. The map captures static structural features — walls, columns, fixed machinery, permanent racking — that serve as reliable navigation landmarks.

Localization

After the initial map exists, SLAM shifts to localization mode during normal operations. The AGV or AMR continuously compares its live sensor data against the stored map to determine its current position and heading. This comparison runs at 10–50 Hz depending on the platform — the robot recalculates its position 10 to 50 times per second.

Localization commonly uses Monte Carlo Localization (MCL), a probabilistic technique built on particle filtering. The algorithm maintains hundreds or thousands of position hypotheses (“particles”), each representing a possible robot location. As new sensor data arrives, particles that match the observed environment gain probability weight; particles that contradict the sensor data lose weight. The highest-weighted cluster of particles represents the robot’s estimated position.
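The predict, weight, and resample cycle can be sketched in a deliberately simplified setting: a robot moving along a one-dimensional corridor and measuring its distance to an end wall. The corridor, the noise values, and the function name `mcl_step` are assumptions for illustration; a production localizer works in (x, y, heading) against a full occupancy grid.

```python
import numpy as np

def mcl_step(particles, control, measured_range, wall_x, rng,
             motion_noise=0.02, sensor_noise=0.05):
    """One Monte Carlo Localization update for a robot on a 1D corridor."""
    # 1. Predict: move every hypothesis by the commanded distance plus noise.
    particles = particles + control + rng.normal(0.0, motion_noise, particles.size)
    # 2. Weight: hypotheses whose expected range matches the measurement score higher.
    expected = wall_x - particles
    weights = np.exp(-0.5 * ((measured_range - expected) / sensor_noise) ** 2)
    weights += 1e-300                      # avoid an all-zero weight vector
    weights /= weights.sum()
    # 3. Resample: draw a new particle set in proportion to the weights.
    idx = rng.choice(particles.size, size=particles.size, p=weights)
    return particles[idx]

rng = np.random.default_rng(0)
wall_x, true_x = 10.0, 2.0
particles = rng.uniform(0.0, 10.0, 1000)   # robot could be anywhere at first
for _ in range(10):
    true_x += 0.1
    measurement = wall_x - true_x          # noise-free reading, for clarity
    particles = mcl_step(particles, 0.1, measurement, wall_x, rng)
estimate = particles.mean()
```

After a handful of updates the particle cloud collapses around the true position, which is the behavior the paragraph above describes.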

Industrial SLAM AGV platforms typically localize to within ±10–20 mm in static environments with good geometric features. In open areas with few distinctive landmarks, such as large empty warehouse aisles, accuracy can degrade to ±30–50 mm.

Scan Matching

Scan matching is the algorithmic engine that aligns each new sensor scan to the existing map. The most widely used algorithm in industrial SLAM is Iterative Closest Point (ICP), which finds the geometric transformation — translation and rotation — that best aligns the current scan’s points with the map’s reference points.

The process works iteratively: ICP starts from an initial alignment (typically the odometry estimate), pairs each scan point with its closest map point, calculates the error between the paired points, adjusts the transformation to reduce that error, and repeats until convergence. A single scan match typically converges in 20–50 iterations, completing in under 10 milliseconds on modern embedded processors (Intel i5/i7 class or equivalent ARM platforms).
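The iteration can be sketched as a minimal point-to-point ICP in 2D. This version uses brute-force nearest-neighbor search and an SVD-based rigid fit, which is one standard way to solve the alignment step; commercial platforms typically use faster search structures and more robust variants, so treat this as an illustration rather than any vendor's implementation.

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Least-squares rotation R and translation t mapping src onto dst (SVD method)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    R = Vt.T @ U.T
    if np.linalg.det(R) < 0:               # guard against a reflection
        Vt[-1, :] *= -1
        R = Vt.T @ U.T
    return R, dst_c - R @ src_c

def icp_2d(scan, reference, max_iterations=50, tolerance=1e-8):
    """Align scan to reference; returns accumulated R, t and the final mean error."""
    R_total, t_total = np.eye(2), np.zeros(2)
    current, prev_error = scan.copy(), np.inf
    for _ in range(max_iterations):
        # Pair every scan point with its closest reference point.
        d2 = ((current[:, None, :] - reference[None, :, :]) ** 2).sum(axis=2)
        nearest = reference[d2.argmin(axis=1)]
        error = np.sqrt(d2.min(axis=1)).mean()
        if abs(prev_error - error) < tolerance:
            break                           # converged: error stopped improving
        prev_error = error
        R, t = best_rigid_transform(current, nearest)
        current = current @ R.T + t
        R_total, t_total = R @ R_total, R @ t_total + t
    return R_total, t_total, error

# Reference: two perpendicular walls sampled every 10 cm.
wall_a = np.column_stack([np.linspace(0.0, 4.0, 41), np.zeros(41)])
wall_b = np.column_stack([np.zeros(30), np.linspace(0.1, 3.0, 30)])
reference = np.vstack([wall_a, wall_b])
# Scan: the same walls seen from a slightly wrong pose estimate.
angle = np.radians(0.3)
rot = np.array([[np.cos(angle), -np.sin(angle)], [np.sin(angle), np.cos(angle)]])
scan = reference @ rot.T + np.array([0.01, -0.01])
R, t, error = icp_2d(scan, reference)
```

With such a small initial offset the toy example converges in a few iterations; real scans with noise, partial overlap, and larger odometry error need more.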

Advanced SLAM implementations use Normal Distributions Transform (NDT) scan matching, which represents the map as a grid of normal distributions rather than raw points. NDT scan matching handles noisy environments more robustly than ICP — a meaningful advantage in Malaysian factories where dust, humidity, and reflective surfaces can degrade raw LiDAR point quality.

Loop Closure

Loop closure is the process that corrects accumulated drift when the robot returns to a previously visited location. Every SLAM system accumulates small errors with each scan match — a 0.1-degree heading error, compounded over 500 meters of travel, produces a 0.87 m position offset. Without loop closure, the map gradually distorts.
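The drift figure above follows from basic trigonometry if the heading error is treated as a constant bias over straight-line travel, which is a simplifying assumption. A short check, with a hypothetical helper name:

```python
import math

def lateral_drift_m(heading_error_deg: float, distance_m: float) -> float:
    """Sideways offset after driving distance_m with a constant heading bias."""
    return distance_m * math.tan(math.radians(heading_error_deg))

print(round(lateral_drift_m(0.1, 500.0), 3))   # about 0.873 m
```

In practice heading errors fluctuate rather than stay constant, so real drift grows less predictably, but the example shows why even tiny angular errors matter over factory-scale distances.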

When the robot recognizes that its current sensor data matches a previously mapped location, the loop closure algorithm calculates the accumulated error and distributes corrections backward through the entire trajectory. Graph-based SLAM formulations handle loop closure through pose graph optimization — each robot position is a node, each scan match is an edge, and loop closure adds a constraint that forces the graph to resolve its accumulated inconsistencies.
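A stripped-down pose graph shows how a loop closure constraint pulls a drifted trajectory back into shape. The sketch below optimizes positions only, with no rotations, so the problem stays linear and can be solved in one least-squares step. Real pose graphs include heading and are solved iteratively by libraries such as g2o, GTSAM, or Ceres; the edge format and function name here are assumptions for illustration.

```python
import numpy as np

def optimize_pose_graph(n_poses, edges):
    """Solve for 2D positions given relative-offset constraints.

    edges: list of (i, j, measured_offset, weight), meaning p_j - p_i ~ measured_offset.
    Pose 0 is anchored at the origin.
    """
    rows, rhs = [], []
    for i, j, offset, weight in edges:
        row = np.zeros(n_poses)
        row[j] += weight
        row[i] -= weight
        rows.append(row)
        rhs.append(weight * np.asarray(offset, dtype=float))
    A = np.array(rows)[:, 1:]          # drop column 0 because pose 0 is fixed
    b = np.array(rhs)
    solution, *_ = np.linalg.lstsq(A, b, rcond=None)
    return np.vstack([np.zeros(b.shape[1]), solution])

# The robot drives a 1 m square; each odometry step carries a small error.
odometry = [(1.02, 0.01), (0.01, 1.02), (-0.98, 0.01), (0.01, -0.98)]
edges = [(k, k + 1, d, 1.0) for k, d in enumerate(odometry)]
dead_reckoned = np.vstack([np.zeros(2), np.cumsum(odometry, axis=0)])

# Loop closure: the robot recognizes that pose 4 is the same place as pose 0.
loop_closure = (0, 4, (0.0, 0.0), 1.0)
optimized = optimize_pose_graph(5, edges + [loop_closure])
```

Dead reckoning alone leaves the final pose 6 cm off in each axis. With the loop closure edge weighted equally to the odometry edges, the residual is spread across all five constraints and the final pose lands 1.2 cm from the start in each axis; a higher loop closure weight would pull it closer still.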

Effective loop closure is what separates industrial-grade SLAM from research prototypes. In a 50,000 m² distribution center, a SLAM AMR may travel 15–20 km between charging cycles; without robust loop closure, map integrity degrades within hours. Production SLAM platforms implement loop closure detection at every trajectory intersection, maintaining map consistency across continuous multi-shift operations.

Types of SLAM for Mobile Robot Navigation

SLAM implementations vary based on the primary sensor modality, the mathematical framework, and the computational architecture. Each variant presents different trade-offs for factory automation applications.

LiDAR SLAM

LiDAR SLAM uses laser rangefinder data as the primary input. 2D LiDAR SLAM — the dominant approach in industrial AGV and AMR platforms — processes planar laser scans to build and maintain 2D occupancy grid maps. Algorithms include GMapping (particle filter-based), Hector SLAM (scan matching without odometry), and Cartographer (Google’s graph-based approach).

LiDAR SLAM delivers the highest geometric accuracy in structured environments: ±10–20 mm in facilities with distinct walls, columns, and fixed equipment. It operates independently of lighting conditions; LiDAR functions identically in complete darkness and full lighting. Processing requirements are moderate, and 2D LiDAR SLAM runs comfortably on embedded ARM processors.

Limitation: LiDAR SLAM struggles in geometrically repetitive environments — long, featureless corridors where every scan looks identical. It also cannot distinguish between objects at different heights using 2D sensors alone.

Visual SLAM (vSLAM)

Visual SLAM uses camera imagery as the primary input. The algorithm extracts visual features — corners, edges, texture patterns — from sequential camera frames and tracks their movement to estimate the robot’s trajectory and build a 3D feature map. ORB-SLAM3 and RTAB-Map are the most widely deployed visual SLAM frameworks.

Visual SLAM provides rich environmental understanding — the system can identify specific objects, read signs, and distinguish between visually distinct but geometrically similar areas. Camera hardware costs less than LiDAR; a stereo camera pair costs USD 200–500 versus USD 1,500–8,000 for industrial-grade LiDAR.

Limitation: Visual SLAM is sensitive to lighting changes. A factory that runs day shifts under natural light and night shifts under artificial light presents two different visual environments — the algorithm must handle both. Processing requirements are significantly higher than LiDAR SLAM; visual feature extraction and matching demands GPU-class computing power.

RGB-D SLAM

RGB-D SLAM combines color camera imagery with per-pixel depth data from active-stereo, structured-light, or time-of-flight depth sensors (for example, the Intel RealSense D435, an active-stereo camera, and the Microsoft Azure Kinect, a time-of-flight camera). The depth channel provides direct distance measurements, sparing the SLAM computer the cost of stereo depth estimation, while the RGB channel provides visual features for place recognition.

RGB-D SLAM excels in close-range, indoor environments where depth sensor range (0.3–10 m typical) is sufficient. It produces dense 3D maps useful for obstacle avoidance and manipulation tasks.

Limitation: Depth sensor range is too short for large-scale factory mapping. Infrared-based depth sensors lose accuracy under direct sunlight, and structured-light and time-of-flight units can interfere with each other when multiple robots operate in close proximity.

Graph-Based SLAM

Graph-based SLAM is a mathematical framework — not a sensor type — that can use any sensor modality. It represents the SLAM problem as a graph where nodes are robot poses and edges are spatial constraints derived from sensor measurements. Optimization algorithms (g2o, GTSAM, Ceres Solver) adjust all node positions simultaneously to minimize the total constraint error.

Graph-based approaches handle loop closure more elegantly than filter-based methods and scale better to large environments. Google Cartographer, the most widely adopted open-source SLAM framework for industrial applications, uses a graph-based architecture.

SLAM Type Comparison

| Characteristic | LiDAR SLAM | Visual SLAM | RGB-D SLAM | Graph-Based |
| --- | --- | --- | --- | --- |
| Primary sensor | 2D/3D LiDAR | Mono/stereo camera | Depth camera + RGB | Any (framework) |
| Accuracy (indoor) | ±10–20 mm | ±30–50 mm | ±20–40 mm | Depends on sensor |
| Lighting dependency | None | High | Moderate | Depends on sensor |
| Compute requirement | Low–moderate | High (GPU) | Moderate | Moderate–high |
| Sensor cost (USD) | 1,500–8,000 | 200–500 | 300–1,200 | N/A |
| Best factory use | Primary navigation | Supplementary recognition | Close-range 3D | Large-scale maps |
| Dominant in AGV/AMR | Yes (90%+ of industrial platforms) | Emerging (multi-sensor fusion) | Niche (manipulation tasks) | Backend optimizer |

LiDAR SLAM remains the standard for industrial SLAM AGV and SLAM AMR deployments. Visual SLAM and RGB-D SLAM increasingly appear as supplementary modalities in sensor fusion architectures — providing richer environmental context alongside LiDAR’s geometric precision.

Mobile Robot Sensors for SLAM Navigation

SLAM algorithm performance depends entirely on sensor hardware quality. The sensors mounted on an AGV or AMR determine mapping resolution, localization accuracy, obstacle detection range, and environmental robustness. Understanding mobile robot sensors helps your engineering team evaluate platform specifications against your facility’s requirements.

2D LiDAR

2D LiDAR is the primary navigation sensor on 90%+ of industrial AGVs and AMRs. The sensor emits a planar fan of laser pulses and measures return time to calculate distances — producing a 2D cross-section of the environment at the sensor’s mounting height (typically 200–300 mm above floor level).

Industrial-grade 2D LiDAR units (SICK TiM series, Hokuyo UST series, SLAMTEC RPLidar) deliver 10–25 m range, 0.25–1.0-degree angular resolution, and 10–40 Hz scan rates. Safety-rated 2D LiDAR (SICK microScan3, Omron OS32C) combines navigation data with personnel detection for ISO 3691-4 compliance — one sensor handles both SLAM input and safety zone monitoring.

3D LiDAR

3D LiDAR generates volumetric point clouds by scanning multiple laser planes simultaneously. Sensors like the Velodyne VLP-16 (16 channels, 100 m range, 300,000 points/second) and Ouster OS1-32 (32 channels, 120 m range) provide height information that 2D LiDAR cannot capture — detecting overhead obstacles, elevated conveyors, and loading dock ramps.

3D LiDAR appears primarily on outdoor AGVs and large-scale warehouse AMRs where the additional spatial data justifies the USD 4,000–15,000 per-unit sensor cost. Most indoor factory AGVs operate effectively with 2D LiDAR alone.

Depth Cameras

Stereo cameras (Intel RealSense D435i, Stereolabs ZED 2) and structured-light sensors provide dense depth data at 30–90 fps across a 0.3–10 m range. These sensors supplement LiDAR-based SLAM with close-range 3D obstacle detection — identifying objects below the LiDAR scan plane (floor-level pallets, low carts) and above it (hanging cables, overhead structures).

Depth cameras also enable visual place recognition, which strengthens SLAM robustness in geometrically ambiguous environments where LiDAR scans alone cannot distinguish between similar-looking locations.

Inertial Measurement Unit (IMU)

A 6-axis IMU (3-axis accelerometer + 3-axis gyroscope) provides high-frequency motion data — typically 100–400 Hz — that bridges the gap between slower LiDAR scans. When the robot moves between LiDAR updates (every 67–100 ms at 10–15 Hz), the IMU predicts the robot’s trajectory, providing a motion prior that improves scan matching convergence speed and accuracy.

IMU data is particularly critical during rapid acceleration, deceleration, and turning — moments when odometry from wheel encoders becomes unreliable due to wheel slip.
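The bridging role of the IMU can be sketched as simple dead reckoning across one LiDAR interval. The example assumes a 15 Hz LiDAR and a 200 Hz gyroscope, and it takes forward speed as a known input (in practice that would come from the wheel encoders). The function name and the constant-speed simplification are assumptions for illustration.

```python
import math

def propagate_between_scans(pose, speed_m_s, gyro_yaw_rates_rad_s, imu_rate_hz):
    """Dead-reckon (x, y, heading) across one LiDAR interval from gyro samples."""
    x, y, heading = pose
    dt = 1.0 / imu_rate_hz
    for yaw_rate in gyro_yaw_rates_rad_s:
        heading += yaw_rate * dt                   # integrate the gyro
        x += speed_m_s * math.cos(heading) * dt    # advance along the new heading
        y += speed_m_s * math.sin(heading) * dt
    return x, y, heading

lidar_rate_hz, imu_rate_hz = 15.0, 200.0
samples_per_scan = round(imu_rate_hz / lidar_rate_hz)   # 13 IMU samples per scan
motion_prior = propagate_between_scans(
    (0.0, 0.0, 0.0), 1.0, [0.2] * samples_per_scan, imu_rate_hz
)
```

The resulting pose is the starting guess handed to scan matching; the closer this prior is to the truth, the fewer iterations the matcher needs.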

Wheel Encoders

Optical or magnetic encoders on the drive wheels measure rotation — converting wheel revolutions into distance traveled and heading change. Encoder data provides the primary odometry input for SLAM: the initial estimate of how far and in what direction the robot has moved between sensor scans.

Encoder accuracy degrades on smooth, polished floors (common in Malaysian electronics and pharmaceutical factories) where wheel slip increases. SLAM algorithms compensate by weighting encoder data lower and relying more heavily on LiDAR scan matching and IMU fusion when slip is detected.
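For a differential-drive vehicle, the conversion from encoder ticks to a pose update can be sketched as follows. The drive geometry, parameter names, and midpoint-heading approximation are assumptions for this example; steered or omnidirectional AGVs use different kinematic models.

```python
import math

def encoder_odometry(x, y, heading, left_ticks, right_ticks,
                     ticks_per_rev, wheel_radius_m, track_width_m):
    """Update a differential-drive pose from encoder ticks since the last update."""
    metres_per_tick = 2.0 * math.pi * wheel_radius_m / ticks_per_rev
    d_left = left_ticks * metres_per_tick
    d_right = right_ticks * metres_per_tick
    d_centre = (d_left + d_right) / 2.0               # distance the chassis moved
    d_heading = (d_right - d_left) / track_width_m    # change in heading (rad)
    # Midpoint approximation: travel along the average heading of the step.
    x += d_centre * math.cos(heading + d_heading / 2.0)
    y += d_centre * math.sin(heading + d_heading / 2.0)
    return x, y, heading + d_heading

# One full revolution of both 0.1 m wheels moves the robot one circumference forward.
pose = encoder_odometry(0.0, 0.0, 0.0, 1000, 1000, 1000, 0.1, 0.5)
```

Wheel slip breaks the assumption that ticks equal distance traveled, which is why the estimate is treated as a starting guess and corrected by scan matching.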

Ultrasonic Sensors

Ultrasonic transducers emit sound pulses and measure echo return time, detecting objects at 0.2–5 m range largely regardless of surface color or transparency. Ultrasonic sensors detect glass walls, transparent barriers, and highly reflective surfaces that LiDAR may miss (glass transmits most of the laser light, and specular surfaces can deflect the beam away from the sensor instead of returning it).

Most industrial AGVs mount 4–8 ultrasonic sensors around the chassis perimeter as a supplementary safety and obstacle detection layer — not as SLAM input, but as a collision avoidance backup.

Mobile Robot Sensor Specifications

| Sensor Type | Range | Update Rate | Accuracy | Cost (USD) | SLAM Role |
| --- | --- | --- | --- | --- | --- |
| 2D LiDAR (navigation) | 10–25 m | 10–40 Hz | ±30 mm | 1,500–5,000 | Primary mapping + localization |
| 2D LiDAR (safety-rated) | 5–9 m | 40–80 Hz | ±40 mm | 2,000–6,000 | Safety zones + navigation |
| 3D LiDAR | 30–120 m | 10–20 Hz | ±20 mm | 4,000–15,000 | 3D mapping, outdoor use |
| Depth camera | 0.3–10 m | 30–90 fps | ±2 mm at 1 m | 200–1,200 | Close-range 3D, visual features |
| IMU (6-axis) | N/A | 100–400 Hz | 0.1°/s drift | 50–500 | Motion prediction, scan prior |
| Wheel encoder | N/A | 1,000+ Hz | ±1 mm/m | 30–200 | Odometry, distance tracking |
| Ultrasonic | 0.2–5 m | 10–40 Hz | ±10 mm | 10–50 | Transparent object detection |

The sensor suite on a typical industrial SLAM AGV costs USD 5,000–15,000 — representing 15–25% of the total vehicle hardware cost. Sensor selection directly determines the robot’s navigation capability in your specific factory environment.

SLAM vs Traditional AGV Navigation

Traditional AGV navigation relies on physical infrastructure embedded in or mounted throughout the facility. SLAM-based navigation relies on onboard sensors and computation. The two approaches present fundamentally different trade-offs for deployment, maintenance, and operational flexibility.

Wire-guided navigation requires cutting channels into the factory floor and embedding current-carrying wires. The AGV follows the electromagnetic field. Installation costs USD 15–50 per linear meter; a 500 m route network costs USD 7,500–25,000 in infrastructure alone. Any route change requires floor cutting, wire installation, and resurfacing — typically 2–5 days of production downtime per modification.

Magnetic tape navigation uses ferrite tape adhered to the floor surface. Installation is faster (USD 5–15 per linear meter) but tape wears under forklift traffic, requires regular replacement (every 6–12 months in high-traffic zones), and cannot span areas where floor washing or chemical exposure degrades adhesive.
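The per-meter figures above translate into route budgets with simple multiplication. The helper below is a hypothetical convenience for running that arithmetic on your own route length; it covers installation material only and ignores downtime, resurfacing, and tape replacement cycles.

```python
def route_cost_range(route_length_m, low_usd_per_m, high_usd_per_m):
    """Return the (low, high) installation cost in USD for a guided route."""
    return route_length_m * low_usd_per_m, route_length_m * high_usd_per_m

wire_guided = route_cost_range(500, 15, 50)    # (7500, 25000)
magnetic_tape = route_cost_range(500, 5, 15)   # (2500, 7500)
```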

Laser reflector navigation mounts retroreflective targets on walls and columns. The AGV’s rotating laser scanner measures angles to 3+ reflectors simultaneously, triangulating its position to ±1–5 mm accuracy. Reflector systems deliver the highest precision of any navigation method but require line-of-sight to multiple reflectors at all times — problematic in facilities with dense racking or frequent layout changes that obstruct sight lines.

SLAM navigation eliminates all physical infrastructure. The robot maps and navigates using the facility’s existing structural features. Deployment time drops from weeks to days; route changes are software updates taking minutes, not construction projects taking days. The trade-off: SLAM accuracy (±10–30 mm) is lower than wire-guided (±1 mm) or laser reflector (±1–5 mm) systems, and SLAM performance depends on environmental conditions that physical infrastructure systems are immune to.

| Factor | Wire-Guided | Magnetic Tape | Laser Reflector | SLAM |
| --- | --- | --- | --- | --- |
| Infrastructure cost | High | Moderate | Moderate | None |
| Positional accuracy | ±1 mm | ±5 mm | ±1–5 mm | ±10–30 mm |
| Route change time | Days | Hours | Hours | Minutes |
| Maintenance burden | Floor repair | Tape replacement | Reflector cleaning | Software updates |
| Deployment time | 4–8 weeks | 1–3 weeks | 2–4 weeks | 1–3 days |
| Dynamic obstacle handling | Stop only | Stop only | Stop only | Reroute dynamically |

For Malaysian manufacturers running mixed-product facilities with annual layout changes, SLAM navigation delivers the strongest ROI through eliminated infrastructure costs and near-zero route change downtime. For fixed-line automotive or semiconductor production where ±1 mm docking precision is non-negotiable, traditional wire-guided or laser reflector navigation remains the appropriate choice.

Challenges of SLAM in Malaysian Factories

Malaysian manufacturing facilities present specific environmental conditions that challenge SLAM performance. Understanding these challenges before deployment prevents costly troubleshooting after installation.

Reflective and Transparent Surfaces

Stainless steel equipment, glass partition walls, polished epoxy floors, and aluminum-clad machinery create specular reflections that scatter LiDAR returns. Instead of bouncing directly back to the sensor, laser pulses reflect at oblique angles — producing ghost points, phantom walls, or missing returns in the point cloud. In food and beverage facilities (a major Malaysian manufacturing sector), stainless steel surfaces can occupy 30–50% of the LiDAR’s field of view.

Mitigation: Multi-sensor fusion combining LiDAR with depth cameras and ultrasonic sensors. Configure SLAM software to filter outlier points exceeding expected distance thresholds. In extreme cases, apply anti-reflective tape or matte coatings to the most problematic surfaces — a USD 500–2,000 investment that can resolve persistent localization failures.
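A simple form of the outlier filtering mentioned above is sketched here: it discards returns outside the sensor's plausible range and isolated spikes that disagree with both neighboring beams. The thresholds and the function name are illustrative assumptions; commercial SLAM software exposes its own filter settings.

```python
def filter_scan(ranges_m, min_range_m=0.05, max_range_m=25.0, max_jump_m=0.5):
    """Replace implausible LiDAR returns with None.

    A return is dropped if it lies outside [min_range_m, max_range_m], or if it
    differs from both neighbouring beams by more than max_jump_m (a lone spike,
    typical of a ghost point from a reflective surface).
    """
    cleaned = []
    n = len(ranges_m)
    for i, r in enumerate(ranges_m):
        in_bounds = min_range_m <= r <= max_range_m
        prev_r = ranges_m[i - 1] if i > 0 else r
        next_r = ranges_m[i + 1] if i < n - 1 else r
        isolated = abs(r - prev_r) > max_jump_m and abs(r - next_r) > max_jump_m
        cleaned.append(r if in_bounds and not isolated else None)
    return cleaned

# A wall at about 2 m with one ghost point (9.5 m) and one out-of-range return (40 m).
print(filter_scan([2.0, 2.01, 9.5, 2.02, 2.03, 40.0]))
# [2.0, 2.01, None, 2.02, 2.03, None]
```

A filter like this removes single-beam artifacts; large mirrored regions produce consistent ghost walls that only sensor fusion or surface treatment can resolve.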

Dynamic Environments

Malaysian factories frequently operate with mixed traffic — forklifts, manual carts, walking personnel, and temporary material staging areas that change shift by shift. SLAM algorithms must distinguish between static features (walls, fixed machinery) that form the navigation map and dynamic objects (people, vehicles, temporary pallets) that should be treated as obstacles, not landmarks.

If the SLAM algorithm incorporates dynamic objects into the map, the map degrades — “phantom” features appear and disappear, confusing subsequent localization. Factories with high traffic density in narrow aisles (common in Malaysian electronics manufacturing with compact floor plans) face the highest risk of dynamic object contamination.

Mitigation: Configure SLAM to use conservative map update policies — require features to persist across multiple scans before incorporating them into the reference map. Deploy platforms with dedicated dynamic object filtering (MiR’s AMR platform, for example, maintains separate static and dynamic environment layers).
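A conservative map update policy can be sketched as a persistence filter: a cell is promoted into the reference map only after it has been observed as occupied in a set number of consecutive scans. The class name and the default of five scans are assumptions for illustration. Real platforms usually apply much longer time windows, since a parked pallet can persist for hours.

```python
from collections import defaultdict

class PersistenceFilter:
    """Promote a cell to the static map only after N consecutive sightings."""

    def __init__(self, required_scans=5):
        self.required_scans = required_scans
        self.streak = defaultdict(int)   # consecutive scans each candidate was seen
        self.static_cells = set()

    def update(self, occupied_cells):
        occupied = set(occupied_cells)
        # A candidate that vanishes from the scan loses its streak.
        for cell in list(self.streak):
            if cell not in occupied:
                del self.streak[cell]
        for cell in occupied:
            self.streak[cell] += 1
            if self.streak[cell] >= self.required_scans:
                self.static_cells.add(cell)
        return self.static_cells

filt = PersistenceFilter(required_scans=3)
wall, forklift = (10, 4), (3, 7)
filt.update([wall, forklift])
filt.update([wall])              # the forklift has moved on
filt.update([wall, forklift])    # it passes again, but the streak restarted
```

After three scans the wall cell is part of the static map, while the intermittently seen forklift cell is not.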

RF Interference and Environmental Noise

Dense PLC networks, variable frequency drives (VFDs), high-power welding equipment, and 5 GHz Wi-Fi access points generate electromagnetic interference that can affect sensor communication buses (Ethernet, USB) and Wi-Fi-dependent fleet management links. Malaysian factories integrating AGVs with Siemens S7-1500 PLCs via PROFINET must ensure network segmentation prevents SLAM sensor data packets from competing with PLC control traffic on the same subnet.

Acoustic noise from stamping presses, CNC machines, and pneumatic systems does not directly affect LiDAR or camera SLAM — but can degrade ultrasonic sensor performance, reducing the effectiveness of supplementary obstacle detection.

Mitigation: Industrial-rated sensor hardware with shielded communication interfaces. Dedicated VLAN for robot navigation traffic, separated from PLC/SCADA networks. Ultrasonic sensors with adjustable frequency and gain to operate above factory acoustic noise floors.

Heat and Humidity

Malaysian ambient temperatures reach 35–38°C with 80–95% relative humidity. Indoor factory temperatures in non-climate-controlled areas (warehouses, loading docks, raw material storage) can exceed 40°C. LiDAR optical components and camera lenses can accumulate condensation during rapid temperature transitions — a robot moving from an air-conditioned cleanroom (22°C) to an adjacent non-cooled warehouse (38°C) may experience lens fogging that temporarily blinds the sensor.

Mitigation: Specify IP65-rated sensor enclosures with internal heating elements for condensation prevention. Allow 2–3 minutes of sensor acclimatization time when robots transition between temperature zones. Industrial LiDAR units from SICK and Hokuyo are rated for 0–50°C operation and handle Malaysian conditions without performance degradation when properly housed.
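Whether a lens will fog can be estimated by comparing the sensor's surface temperature with the dew point of the air it is entering. The sketch below uses the Magnus approximation with commonly cited coefficients; the function names are hypothetical, and the result is an estimate, not a substitute for the sensor manufacturer's guidance.

```python
import math

def dew_point_c(air_temp_c: float, relative_humidity_pct: float) -> float:
    """Approximate dew point in Celsius using the Magnus formula."""
    a, b = 17.62, 243.12   # Magnus coefficients for water vapour over liquid water
    gamma = math.log(relative_humidity_pct / 100.0) + a * air_temp_c / (b + air_temp_c)
    return b * gamma / (a - gamma)

def condensation_risk(sensor_temp_c, air_temp_c, relative_humidity_pct) -> bool:
    """True if a surface at sensor_temp_c is colder than the air's dew point."""
    return sensor_temp_c < dew_point_c(air_temp_c, relative_humidity_pct)

# Robot leaves a 22 C cleanroom for a 38 C warehouse at 80% relative humidity.
print(round(dew_point_c(38.0, 80.0), 1))      # about 33.9
print(condensation_risk(22.0, 38.0, 80.0))    # True
```

In this scenario the dew point is roughly 34°C, so a lens still at 22°C will fog until it warms past that temperature, which is why the acclimatization time or enclosure heating matters.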

Frequently Asked Questions

What does SLAM stand for in AGV navigation?

SLAM stands for Simultaneous Localization and Mapping. In AGV navigation, SLAM refers to the onboard software system that builds a digital map of the factory environment using LiDAR sensor data while simultaneously tracking the AGV’s position within that map — enabling infrastructure-free navigation without magnetic tape, embedded wires, or mounted reflectors.

How accurate is SLAM navigation compared to wire-guided AGVs?

SLAM navigation achieves ±10–30 mm positional accuracy in typical industrial environments — sufficient for material transport, pallet handling, and most docking operations. Wire-guided AGVs achieve ±1 mm accuracy. Laser reflector systems achieve ±1–5 mm. For applications requiring sub-5 mm docking precision (semiconductor wafer transport, precision machine loading), SLAM alone may not meet tolerance requirements; hybrid approaches combining SLAM navigation with fine-positioning sensors (vision-based docking, inductive guides at stations) bridge this gap.

Can SLAM AGVs work in the dark?

Yes. LiDAR-based SLAM operates independently of ambient lighting — the laser rangefinder provides its own illumination source and measures reflected pulse timing, not visible light. A SLAM AGV using 2D LiDAR navigates identically in complete darkness, standard factory lighting, and bright sunlight. Visual SLAM (camera-based) does require adequate lighting; facilities deploying vSLAM-equipped robots must maintain consistent illumination or supplement with infrared lighting systems.

How long does it take to map a factory for SLAM navigation?

Initial factory mapping for a SLAM-equipped AGV or AMR typically requires 2–4 hours for a 10,000 m² facility. An operator drives the robot through all navigation zones at walking speed (3–5 km/h) while the SLAM software constructs the occupancy grid map. Post-mapping, the software processes the raw data and generates the navigation-ready map — an additional 30–60 minutes of computation time. Total deployment from unboxing to first autonomous run: 1–3 days, including map creation, zone configuration, destination programming, and integration testing.

What happens when the factory layout changes?

SLAM-equipped AGVs and AMRs handle layout changes through map updates, not infrastructure rework. Minor changes (moved pallets, rearranged staging areas) are absorbed automatically by the robot’s real-time localization, which treats changed features as obstacles and navigates around them. Major changes (new walls, removed machinery, reconfigured production lines) require a partial or full remap: an operator drives the robot through the affected area, and the SLAM software integrates the new geometry into the existing map. A partial remap takes 30–60 minutes, and the robot resumes autonomous operation as soon as the map update is complete.

Which SLAM type is best for factory AGVs?

2D LiDAR SLAM is the standard for indoor factory AGV and AMR navigation — used by 90%+ of commercially deployed platforms. It delivers the optimal balance of accuracy (±10–20 mm), computational efficiency (runs on embedded processors), environmental robustness (lighting-independent), and sensor cost (USD 1,500–5,000 per LiDAR unit). 3D LiDAR SLAM is justified for outdoor AGVs or large warehouses with significant elevation changes. Visual SLAM supplements LiDAR SLAM in sensor fusion architectures but rarely serves as the sole navigation modality for industrial transport robots.

How does SLAM integrate with factory PLC and SCADA systems?

SLAM handles navigation — PLC and SCADA systems handle task coordination. The integration architecture connects the AGV/AMR fleet management software to the factory’s Siemens (or equivalent) PLC via industrial Ethernet protocols — PROFINET, OPC UA, or Modbus TCP. The PLC sends transport commands (pick from Station A, deliver to Station B); the fleet manager assigns tasks to specific vehicles; SLAM handles the navigation execution autonomously. DNC Automation implements this integration using Siemens TIA Portal for PLC programming and fleet management APIs for bidirectional communication — ensuring the mobile robot fleet operates as a coordinated element within the broader factory automation architecture.
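The division of responsibility can be illustrated with a small sketch: a transport order arrives (standing in for the PLC command), a fleet manager picks the nearest idle vehicle, and the vehicle receives map coordinates to navigate to. Every class, field, and station name here is hypothetical. This is not the API of any fleet manager, Siemens product, or DNC Automation system, and the transport layer (PROFINET, OPC UA, or Modbus TCP) is omitted entirely.

```python
import math
from dataclasses import dataclass

@dataclass
class TransportOrder:
    """What the PLC side would request: move material between two stations."""
    order_id: str
    pick_station: str
    drop_station: str

@dataclass
class Vehicle:
    name: str
    position: tuple      # current (x, y) on the SLAM map, in metres
    busy: bool = False

class FleetManager:
    def __init__(self, vehicles, stations):
        self.vehicles = vehicles
        self.stations = stations   # station name -> (x, y) on the SLAM map

    def dispatch(self, order):
        """Assign the nearest idle vehicle; return its name and waypoint list."""
        idle = [v for v in self.vehicles if not v.busy]
        if not idle:
            return None            # caller should queue the order and retry
        pick_xy = self.stations[order.pick_station]
        chosen = min(idle, key=lambda v: math.dist(v.position, pick_xy))
        chosen.busy = True
        # From here on, the vehicle's SLAM stack handles the actual driving.
        return chosen.name, [pick_xy, self.stations[order.drop_station]]

stations = {"Station A": (10.0, 0.0), "Station B": (0.0, 20.0)}
fleet = FleetManager(
    [Vehicle("amr-1", (50.0, 50.0)), Vehicle("amr-2", (12.0, 1.0))], stations
)
assignment = fleet.dispatch(TransportOrder("T-001", "Station A", "Station B"))
```

The point of the sketch is the boundary: the PLC and fleet manager deal in stations and orders, while coordinates and path execution stay inside the navigation layer.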

Is SLAM reliable enough for 24/7 factory operations?

Production-grade SLAM implementations run 24/7 across thousands of facilities worldwide. Reliability depends on three factors: sensor hardware quality (industrial-rated LiDAR with 50,000+ hour MTBF), map maintenance discipline (scheduled remaps when major layout changes occur), and environmental suitability (sufficient geometric features for localization). In DNC Automation’s deployments, SLAM-equipped AGVs and AMRs operate across 3-shift, 24/7 production schedules with 98%+ uptime — matching or exceeding the availability of traditional guided AGV systems that face their own downtime from infrastructure damage (torn tape, misaligned reflectors, wire breaks).

Conclusion

SLAM navigation transforms AGVs and AMRs from infrastructure-dependent transport vehicles into intelligent, adaptable mobile robots that map, localize, and navigate your factory autonomously. The technology eliminates physical guidance infrastructure, reduces deployment timelines from weeks to days, and enables route changes through software updates — not construction projects.

Malaysian manufacturers implementing SLAM-based mobile robots gain flexibility that fixed-infrastructure navigation cannot deliver: rapid redeployment across changing production layouts, zero infrastructure maintenance costs, and dynamic obstacle handling that maintains throughput in mixed-traffic environments.

DNC Automation’s 35+ engineers deploy and integrate SLAM-equipped AGV and AMR systems with Siemens PLC, SCADA, and MES platforms across manufacturing facilities in Malaysia. Our team handles sensor selection, SLAM configuration, fleet management integration, and PLC communication architecture — delivering a complete mobile robot system, not just hardware.
